书和PPT看得我贼晕,贼累,自己找来数据集写(抄)了代码测试。

目录:

代码实现

每当看那些机翻的书,我向来比较喜欢先看代码,先跑一遍再说,不然真的好容易睡着。

代码需要装numpy和opencv的库,可以先提前pip装好。数据集使用的是The CIFAR-10 dataset中的test_batch和data_batch_1。

代码中的K需要自己设置参数。

来看看下面KNN做图片分类的代码:

import numpy as np

import cv2 as cv

import _pickle as pickle

import heapq

def load_CIFAR10(filename):

"""载入cifar数据集的一个batch"""

training_file = filename + "/data_batch_1"

testing_file = filename + "/test_batch"

# 载入训练集

with open(training_file, 'rb') as f:

data = pickle.load(f, encoding='iso-8859-1')

Xtr = data['data']

Xtr = Xtr[:300]

Ytr = data['labels']

Ytr = Ytr[:300]

# print("len:", len(Xtr[0]))

# 载入测试集

with open(testing_file, 'rb') as f:

data = pickle.load(f, encoding='iso-8859-1')

Xte = data['data']

Xte = Xte[:300]

Yte = data['labels']

Yte = Yte[:300]

return Xtr, Ytr, Xte[0], Yte[0]

class NearestNeighbor(object):

def __init__(self):

pass

def train(self, X, Y):

self.Xtr = X

self.Ytr = Y

def predict_L1(self, X):

num_test = X.shape[0]

Ypred = np.zeros(num_test)

img_distance = []

for i in range(num_test):

# print("i: " ,i)

distance = np.sum(np.abs(self.Xtr[i] - X))

img_distance.append(distance)

# 取出前K个最小的值

min_dist = map(img_distance.index, heapq.nsmallest(K, img_distance))

for i in list(min_dist):

Ypred[i] = Ytr[i]

print("Ypred: ", Ypred)

label_count = []

for i in range(0, 9):

label_count.append(list(Ypred).count(i))

label = np.argmax(label_count)

return label

def predict_L2(self, X, K):

num_test = self.Xtr.shape[0]

Ypred = np.zeros(num_test)

img_distance = []

for i in range(num_test):

# print("i: " ,i)

distance = np.sqrt(np.sum(np.square(self.Xtr[i] - X)))

img_distance.append(distance)

# 取出前K个最小的值

min_dist = map(img_distance.index, heapq.nsmallest(K, img_distance))

for i in list(min_dist):

Ypred[i] = Ytr[i]

print("Ypred: ", Ypred)

label_count = []

for i in range(0, 9):

label_count.append(list(Ypred).count(i))

label = np.argmax(label_count)

return label

Xtr, Ytr, Xte, Yte = load_CIFAR10("./data/cifar-10")

# Xtr_rows = Xtr.reshape(Xtr.shape[0], 32 * 32 * 3)

# Xte_rows = Xte.reshape(Xtr.shape[0], 32 * 32 * 3)

# print("Xte_rows:", Xte_rows)

nn = NearestNeighbor()

nn.train(Xtr, Ytr)

Yte_predict = nn.predict_L2(Xte, 5)

print("Xtr: ", Xtr.shape)

print(Yte_predict)

print('accuracy: %f' % (np.mean(Yte_predict == Yte)))

概念原理

KNN的思想其实很朴素:当没有一个描述数据的模型,那么最好的事情就是观察相似的数据并且把它们选择成同一类。

KNN算法中要对数据分类,就对每个新的输入实例,在训练数据集中找到与该实例最邻近的K个实例,这K个实例中也许会有许多类数据,那么哪个类别的数据最多,就把新的输入实例归于哪个类别。

度量距离

上面说了需要找实例的neighbors,那么就需要度量距离,常见的度量方式有两种:

- 曼哈顿距离(Manhattan distance)

曼哈顿距离可以将像素图片理解成n宫格,将两张图片的n个格子一一相减,计算最后的差值就是两张图片的距离。

本想打一下公式,但是换电脑还没装latex,所以就没有。

- 欧式距离(Euclidean distance)

欧式距离比较常见了,在二维/三维坐标系里我们以前怎么计算两个点的距离,现在就怎么算。

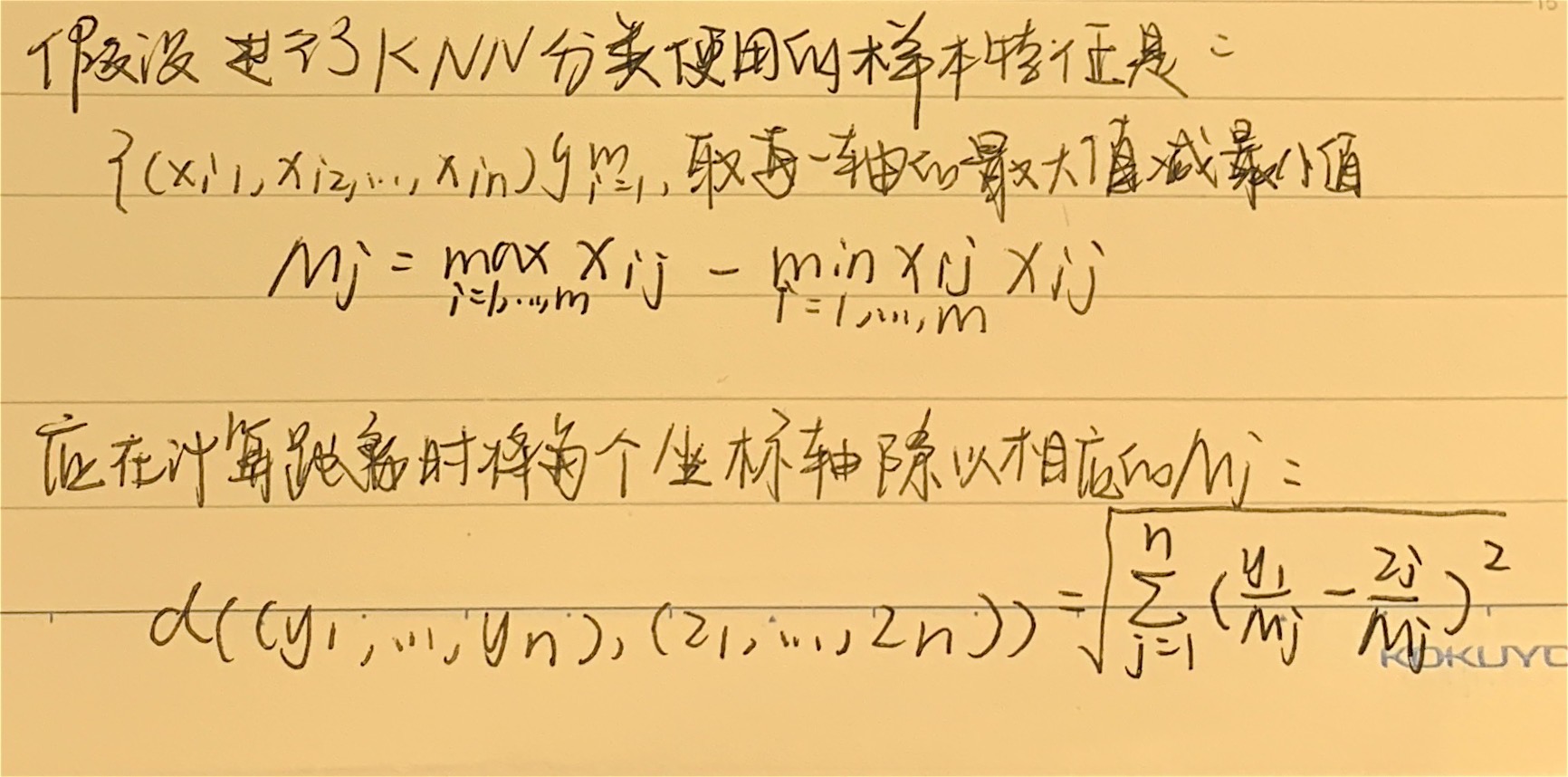

特征归一化

假如度量的数据有多个特征维度,例如:{x1, x2},特征x1的数据范围是[10,20],而x2的数据范围是[100,200]。

这就会导致计算方差时,x1的权重远高于x2,在距离中占更大比例,造成误差,所以有必要使每个特征的权重同等重要。

Reference:

-

Marsland S. Machine learning: an algorithmic perspective[M]. Chapman and Hall/CRC, 2014.